Recently, I watched a presentation by Amy Herman (an art historian who trains the FBI, CIA, and Navy SEALs), and it struck me that the industry has been looking at the problem of AI all wrong. The automotive and insurance domains share a fundamental misconception: that recording an image is the same as understanding it. As a solution architect and senior technology consultant, I have spent decades analyzing the mechanics of complex systems, from the intricacies of software architecture to the high-stakes environments of intelligence and national security.

In her stunning talk, “A Lesson on Looking” (video below), Amy explains that visual intelligence isn’t just about eyesight; it’s about overriding the brain’s tendency to “autolook,” to label things quickly and move on. She shows a picture of what looks like a grandfather clock covered in a white sheet. Your brain says: “Clock. Sheet. Rope.” But if you truly look, you see the rigidity of the folds and the texture. It’s not a clock at all. It’s a sculpture carved entirely from wood.

This is the exact battle we fight every day at Click-Ins. The AI industry is obsessed with “prediction.” But as the CTO of Click-Ins, I prefer a quote often attributed to Lao Tzu:

“Those who have knowledge, don’t predict. Those who predict, don’t have knowledge.”

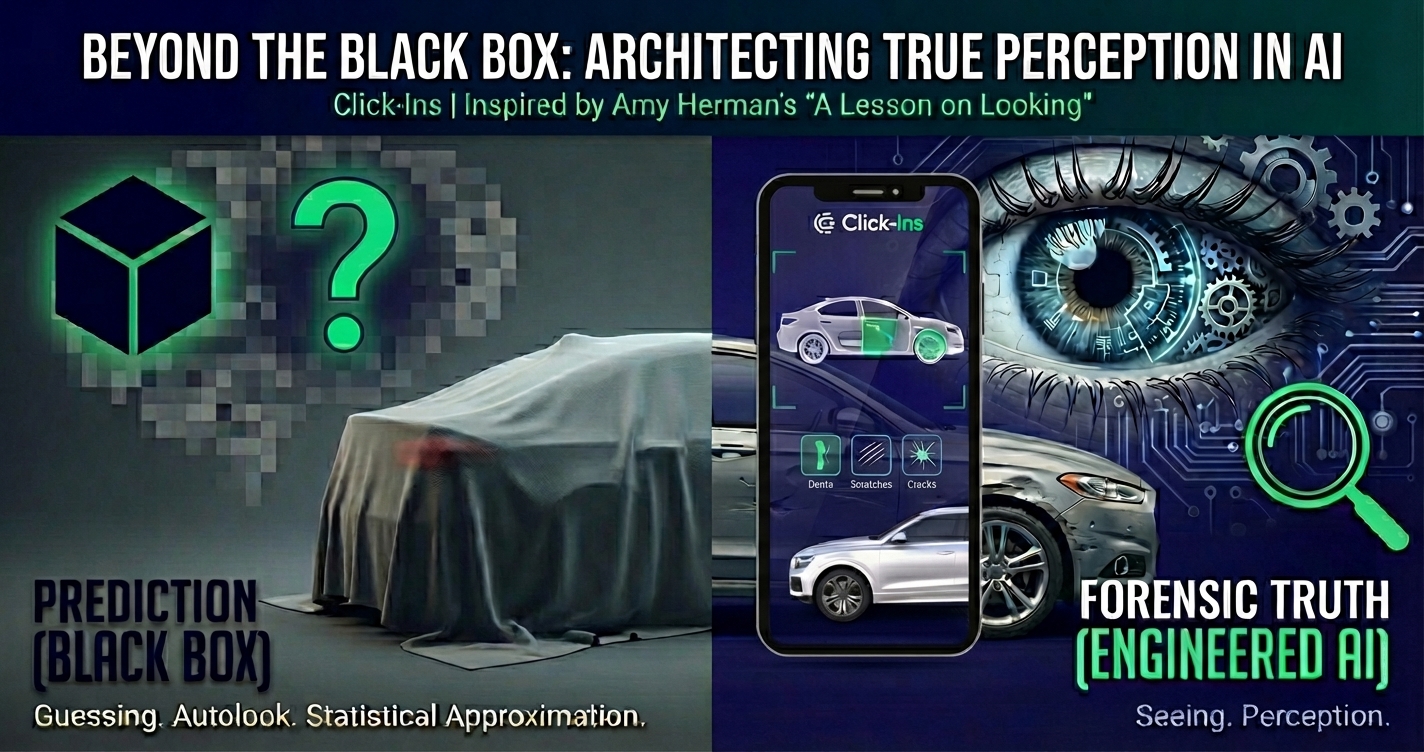

The prevailing industry standard, which relies on “black box” deep learning models to statistically guess whether vehicle damage is present, is a digital approximation of “looking,” not “seeing.”

I have been inspired by the insights in Amy Herman’s seminal presentation, “A Lesson on Looking,”¹ which is grounded in the methodologies used by the Department of Homeland Security (DHS), the FBI, and the CIA. Rooted in military-grade intelligence, Click-Ins translates the cognitive frameworks of elite human intelligence officers, concepts like the “Pertinent Negative” and “Situational Adaptation,” into a hybrid AI solution. Let’s explore how true perception is architected and how the rigorous observation of art can inform the precise engineering of an AI system capable of the “forensic truth” that the automotive and insurance industries require.

The human brain is an evolutionary marvel of efficiency, yet this very efficiency is its greatest liability in forensic observation. A human brain processes millions of visual stimuli daily and engages in “autolooking” — a subconscious editing process in which the mind categorizes, labels, and discards information to conserve energy.¹ We see a chair, and our brain registers “chair,” ignoring the texture of the fabric, the angle of the leg, or the shadow it casts. In daily life, this is a survival mechanism. In law enforcement, intelligence, and high-fidelity asset inspection, it is a catastrophic failure mode.

Amy Herman posits a radical correction to this biological tendency. She argues that “looking” is a physical act — photons hitting the retina — while “seeing” is an intellectual act — the deliberate interrogation of the visual field.¹ This distinction is the cornerstone of the Click-Ins philosophy. When we built our Visual Intelligence solution, we recognized that standard computer vision systems are designed to “look.” They ingest pixels and output labels based on statistical probability; these systems mimic the lazy brain.

To understand the depth of this problem, consider the “Grandfather Clock” exercise described by Amy Herman. She presents an image that, at first glance, appears to be a standard grandfather clock draped in a white sheet and bound with rope. The brain, seeking the path of least resistance, labels it: “Clock.” “Sheet.” “Rope.”²

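Look closer, though, and the folds are too rigid for cloth: the entire piece, “sheet” included, is a sculpture carved from wood. The initial label was a lie. The brain hallucinated “cloth” because it expected cloth.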

At Click-Ins, we try to solve the “Grandfather Clock” paradox by rejecting the single-glance approach. We combine our ontology of a car with disciplines such as CAD, 3D modeling, photogrammetry, and computer vision to reconstruct the scene, detect all known artifacts, position them, and measure them. We do not guess at the severity of damage to a panel; we measure it precisely.
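To make the difference between guessing and measuring concrete, here is a minimal sketch of the simplest photogrammetric idea: derive a physical scale from a feature of known size, then use it to measure damage in the same image. It is not Click-Ins’ pipeline. It assumes the reference feature and the damage lie on roughly the same plane, facing the camera, with lens distortion already corrected; the 300 mm reference length, the helper names, and all coordinates are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    """A pixel coordinate in an image."""
    x: float
    y: float


def pixel_distance(a: Point, b: Point) -> float:
    """Euclidean distance between two image points, in pixels."""
    return math.hypot(b.x - a.x, b.y - a.y)


def mm_per_pixel(ref_a: Point, ref_b: Point, ref_length_mm: float) -> float:
    """Scale factor from a reference feature of known physical length.

    Simplifying assumption: the reference and the damage lie on the same
    plane, roughly perpendicular to the camera axis, and lens distortion has
    already been corrected. A real system would use a full camera model.
    """
    px = pixel_distance(ref_a, ref_b)
    if px == 0:
        raise ValueError("reference endpoints must be distinct")
    return ref_length_mm / px


def measure_mm(a: Point, b: Point, scale: float) -> float:
    """Convert a pixel span to millimetres using the scale factor."""
    return pixel_distance(a, b) * scale


# Hypothetical: a reference feature assumed to be 300 mm long spans 600 px.
scale = mm_per_pixel(Point(0, 0), Point(600, 0), ref_length_mm=300.0)
# Endpoints of a dent's longest axis, located in the same image.
dent_mm = measure_mm(Point(100, 100), Point(130, 140), scale)
print(f"dent length: {dent_mm:.1f} mm")  # dent length: 25.0 mm
```

The output is a number with a unit that a reviewer can check, rather than an adjective such as “minor.”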

Amy Herman challenges her students, from NYPD captains to corporate CEOs: “Look up from your screens for 15 minutes a day.”⁵ She argues that our addiction to digital feeds degrades our visual acuity, narrowing our peripheral vision and blunting our sensitivity to detail.

Herman’s mandate for “disconnected observation” is, paradoxically, relevant to our digital solution. In the intelligence community, the goal is to separate the signal from the noise so that the human analyst can spend their “15 minutes” on what truly matters.⁴

Agents from the FBI, the Secret Service, and the DHS have spent training hours in the quiet galleries of the Metropolitan Museum of Art or the Frick Collection.⁵ This is exactly where Amy Herman conducts her “Art of Perception” courses.

The logic is sound: Art provides a complex, data-rich environment that is “safe.” If an agent misses a detail in a painting, no one dies. This psychological safety net allows the brain to be retrained without the cortisol-induced tunnel vision of a crime scene.⁶

Herman also asks her students to avoid the word “obviously.” Why? Because “obviously” is the language of assumption, not evidence. To say, “Obviously, the woman is sad,” is a projection. To say, “The woman is looking down, her mouth is downturned, and she is holding a handkerchief,” is an observation of fact.⁵

This distinction is the bedrock of the Click-Ins reporting architecture.

Click-Ins has codified the “anti-obviously” rule into its algorithms, which report measurements rather than opinions. This mirrors the forensic reporting standards of a court of law and provides the evidentiary basis insurance carriers need to defend a claim denial or approve a payout.

The above scenario relates directly to our automated guidance. When a user takes photos of a car for a self-inspection, their “body language” (the in-depth image analysis) tells us a story:

Assessment is the gathering of raw data. In a crisis, an agent must assess the exits, the crowd density, and the potential threats before formulating a plan. Herman teaches agents to “slow down” because “the average museum visitor spends seventeen seconds viewing each work of art.”⁴ Seventeen seconds is insufficient for intelligence; it is insufficient for survival.

The Click-Ins Architecture:

Standard vehicle inspections are the “seventeen-second museum visit.” An inspector walks around the car, checks the fuel, and waves it through. Damage gets missed because of the patterned, autolooking behavior described above.

Analysis is the prioritization of assessed data through a framework of knowledge. An intelligence analyst doesn’t just look for anomalies; they map those anomalies against known patterns of behavior. They ask: “Does this visual evidence make sense within the rules of this environment?”

The Click-Ins Architecture:

Herman emphasizes that “communication is the hinge” of intelligence.⁶ If an agent sees a threat but communicates it poorly (“There’s a bad guy over there”), the team cannot react. Precision is paramount. The SALUTE report used in the military (Size, Activity, Location, Unit, Time, Equipment) is a model of articulation.

The Click-Ins Architecture:

We have developed a proprietary ontology: a “Damage Grammar,” if you will.

This structured output is machine-readable and human-verifiable. It allows for the automated ordering of parts (e.g., “Order touch-up paint code X” vs. “Order replacement bumper”). It transforms the visual data into actionable business intelligence.
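Because the Damage Grammar itself is proprietary, the following is only a minimal sketch, under our own assumptions, of what a machine-readable, human-verifiable damage record could look like and how explicit rules might turn it into a parts order. In the spirit of the SALUTE report, each record states what, where, and how big instead of “severe” or “minor.” The field names, the three damage types, and the 0.1 mm touch-up threshold are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class DamageType(Enum):
    SCRATCH = "scratch"
    DENT = "dent"
    CRACK = "crack"


@dataclass(frozen=True)
class DamageRecord:
    """One observation stated as facts: what, where, and how big."""
    part: str                 # hypothetical part identifier, e.g. "bumper"
    damage_type: DamageType
    location: str             # position on the part, e.g. "lower_left"
    length_mm: float
    depth_mm: float
    paint_code: str           # factory paint code of the panel


# Hypothetical threshold, for illustration only: scratches no deeper than
# this are treated as touch-up candidates.
MAX_TOUCH_UP_DEPTH_MM = 0.1


def recommend_action(rec: DamageRecord) -> str:
    """Map a measured record to a business action via explicit rules."""
    if rec.damage_type is DamageType.CRACK:
        return f"Order replacement {rec.part}"
    if rec.damage_type is DamageType.SCRATCH and rec.depth_mm <= MAX_TOUCH_UP_DEPTH_MM:
        return f"Order touch-up paint code {rec.paint_code}"
    return f"Schedule repair of {rec.part}"


def to_json(rec: DamageRecord) -> str:
    """Serialize a record so downstream systems can consume it."""
    data = asdict(rec)
    data["damage_type"] = rec.damage_type.value  # enum -> plain string
    return json.dumps(data, sort_keys=True)


scratch = DamageRecord("bumper", DamageType.SCRATCH, "lower_left", 42.0, 0.05, "X")
crack = DamageRecord("bumper", DamageType.CRACK, "center", 120.0, 2.0, "X")
print(recommend_action(scratch))  # Order touch-up paint code X
print(recommend_action(crack))    # Order replacement bumper
print(to_json(scratch))
```

Keeping the rules separate from the record means a human can verify both the measurement and the decision that followed from it.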

The threat landscape is changing daily. Terrorists change tactics; criminals change patterns. An agent who stops learning is an agent who will eventually fail. Herman teaches “Adaptation” as the ability to shift perspective: to see the “familiar” with fresh eyes.⁸

The Click-Ins Architecture:

How does an AI “adapt”? Through Synthetic Data.

Real-world data is static. If we train on data from 2020, our AI might struggle with the design language of a 2024 Tesla Cybertruck (which breaks all standard geometric rules of “car”).

To illustrate the Pertinent Negative, Herman uses René Magritte’s painting Time Transfixed (La Durée poignardée). The painting depicts a steam locomotive emerging from a fireplace. Viewers are immediately struck by the absurdity of the train. They list the positive elements (the train, the clock, the mirror, the candlesticks), but they miss the negatives:

Herman notes that “conspicuous absences are only conspicuous to eyes trained to look for them”.¹⁰ An average driver might barely notice a minor dent on a car’s trunk, yet a professional inspector can spot it instantly.

Most AI systems lack that training: they are trained on Object Detection (finding what is there) and rarely on Void Detection (finding what is not there).
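Object detection answers “what is here?”; void detection needs a reference to compare against. A minimal sketch, assuming a hypothetical per-model list of expected parts (standing in for a vehicle ontology) and a detector whose output is a set of part names, is a set difference with one safeguard: a part may only be reported missing if its expected location actually appeared in a photo. The function and part names are illustrative, not Click-Ins’ API.

```python
from dataclasses import dataclass
from typing import FrozenSet, Set


@dataclass(frozen=True)
class VoidReport:
    missing: FrozenSet[str]     # expected, its location was in view, not found
    unverified: FrozenSet[str]  # expected, but its location was never in view


def find_voids(expected: Set[str], covered: Set[str], detected: Set[str]) -> VoidReport:
    """Void detection by comparing observations against a reference part list.

    expected: parts the reference model of this vehicle says must exist.
    covered:  parts whose expected location appears in at least one photo.
    detected: parts the object detector actually found.
    Without the coverage check, "not detected" could simply mean
    "not photographed".
    """
    in_view = expected & covered
    return VoidReport(
        missing=frozenset(in_view - detected),
        unverified=frozenset(expected - covered),
    )


# Hypothetical inspection: the right side of the car was never photographed.
expected = {"front_bumper", "rear_bumper", "left_mirror", "right_mirror", "cargo_cover"}
covered = {"front_bumper", "rear_bumper", "left_mirror", "cargo_cover"}
detected = {"front_bumper", "rear_bumper", "left_mirror"}

report = find_voids(expected, covered, detected)
print(sorted(report.missing))     # ['cargo_cover']
print(sorted(report.unverified))  # ['right_mirror']
```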

The complement to the Pertinent Negative (the missing part) is the Pertinent Positive (the unauthorized, added part). If a system is trained to know the “Platonic Ideal” of a vehicle, it must also be able to detect deviations from that ideal when a component is introduced rather than removed. In this sense, the AI is tasked with augmenting reality for the human observer, highlighting an object that should not exist.

In the insurance and leasing domains, aftermarket modifications represent a complex, adversarial challenge to asset integrity. While often cosmetic, these “added parts” can have profound legal and financial ramifications:

Just as Click-Ins’ Visual Reasoning Ontology flags the absence of a part, it also flags the presence of an anomalous, non-factory component: a non-standard bumper, an aftermarket spoiler, or a custom exhaust system. Click-Ins’ system identifies this visual intrusion as a disruption of the original design’s truth, providing the forensic evidence needed for an underwriting adjustment or a claim denial defense. The AI does not merely see a spoiler; it sees an unauthorized variable in the risk equation.
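The same reference-based comparison can run in the other direction. A minimal sketch, assuming a hypothetical factory catalog of allowed variants per component slot and a classifier that labels each observed component with a variant name (neither is Click-Ins’ actual ontology), flags anything the factory never offered:

```python
from typing import Dict, Set

# Hypothetical factory catalog for one model: for each component slot, the
# variants the manufacturer offered. Slot and variant names are illustrative.
FACTORY_CATALOG: Dict[str, Set[str]] = {
    "front_bumper": {"standard", "sport"},
    "rear_spoiler": {"none", "lip"},
    "exhaust": {"single", "dual"},
}


def find_additions(observed: Dict[str, str], catalog: Dict[str, Set[str]]) -> Dict[str, str]:
    """Pertinent positives: observed components the factory never offered.

    A component is flagged if its slot does not exist in the catalog at all
    (something was bolted on) or if its variant is not a factory option.
    """
    anomalies: Dict[str, str] = {}
    for slot, variant in observed.items():
        allowed = catalog.get(slot)
        if allowed is None or variant not in allowed:
            anomalies[slot] = variant
    return anomalies


# Hypothetical classifier output for one vehicle.
observed = {
    "front_bumper": "standard",
    "rear_spoiler": "large_wing",
    "exhaust": "dual",
    "roof_rack": "basket",
}
print(find_additions(observed, FACTORY_CATALOG))
# {'rear_spoiler': 'large_wing', 'roof_rack': 'basket'}
```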

The implications of detecting the pertinent negative extend beyond recovering the cost of a cargo cover.

By seeing the void, we properly assess the asset.

Synthetic data is the only way to train an AI model to be unbiased. Real-world data is biased by definition (it only contains photos of accidents that have already happened or images of damages that have already been claimed). Synthetic data allows us to create the “Art” that teaches the AI.
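One concrete way to act on this, offered as an illustration rather than a description of Click-Ins’ training pipeline, is to measure how skewed the real-world label distribution is and generate enough synthetic (for example, rendered) samples to even it out, including for classes with no real examples at all. The class names, counts, and function name below are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Optional


def synthetic_quota(
    real_labels: Iterable[str],
    all_classes: Iterable[str],
    target: Optional[int] = None,
) -> Dict[str, int]:
    """How many synthetic samples each class needs to reach a common target.

    real_labels: one damage-class label per real-world training image.
    all_classes: every class the model should learn, including classes with
                 no real examples yet (damage nobody has claimed so far).
    target:      samples wanted per class; defaults to the largest real class.
    """
    counts = Counter(real_labels)
    classes = sorted(set(all_classes) | set(counts))
    if target is None:
        target = max(counts.values(), default=0)
    # Counter returns 0 for unseen classes, so they receive the full target.
    return {c: max(0, target - counts[c]) for c in classes}


# Hypothetical real-world label distribution, skewed toward common claims.
real = ["scratch"] * 800 + ["dent"] * 150 + ["crack"] * 50
print(synthetic_quota(real, ["scratch", "dent", "crack", "hail_damage"]))
# {'crack': 750, 'dent': 650, 'hail_damage': 800, 'scratch': 0}
```

Equal counts per class are only a simple default; the right target for each class is a design choice.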

Traditional inspection systems (like the massive drive-through gantries used by some companies) rely on brute-force hardware: dozens of high-resolution cameras, laser scanners, and structured light projectors. These are expensive, fragile, and immobile.

Click-Ins replaces this hardware complexity with software intelligence:

By understanding the principles of optics, geometry, and perception, we can extract the same fidelity of data from a $500 phone that competitors extract from a $100,000 gantry. We democratize the inspection process, putting the power of a forensic lab into the pocket of every car dealer, rental agent, and policyholder.

However, some real-world applications do call for dedicated hardware: the workload and the task itself make a mobile or portable solution inappropriate.

Questions for the Reader

As you reflect on Amy Herman’s presentation and the architecture of Click-Ins, I invite you to ask yourself the questions we ask our systems every day:

Conclusion: The Mandate for Truth

At Click-Ins, we have taken this mandate and encoded it into silicon. We have built a system that does not sleep, does not get tired, and does not fall victim to “autolooking.” It treats every vehicle inspection as a trained agent treats a crime scene: with rigorous scrutiny, unbiased analysis, and a relentless search for the truth.

When Eugene Greenberg and I founded Click-Ins, the ambition was never merely to build a scanner. The goal was to build the “Palantir of Insurance.”³ Palantir Technologies revolutionized intelligence analysis by integrating disparate data streams to reveal hidden patterns. Click-Ins aims to do the same for the physical condition of assets.

Whether you are inspecting a rental car, investigating a crime scene, or just looking at a spreadsheet, ask yourself: Are you seeing what is actually there, or are you just seeing what you expect?

Watch Amy’s presentation below. It might just change the way you see the world. And then, come see how the same principles are saving the automotive industry from the blindness of “autolooking.”

Watch the Video: A Lesson on Looking by Amy Herman:

Originally published at https://www.linkedin.com.