Key Takeaways:

Claims adjusters spend a significant amount of time manually reviewing photos, often struggling to identify exact damage locations and measurements. A panel segmentation taxonomy for 3D vehicle models changes this by mapping smartphone images to standardized vehicle panel locations, enabling hybrid AI systems to deliver forensic-grade evidence within minutes.

Click-Ins applies this automotive-focused approach to transform standard photos into audit-ready documentation.

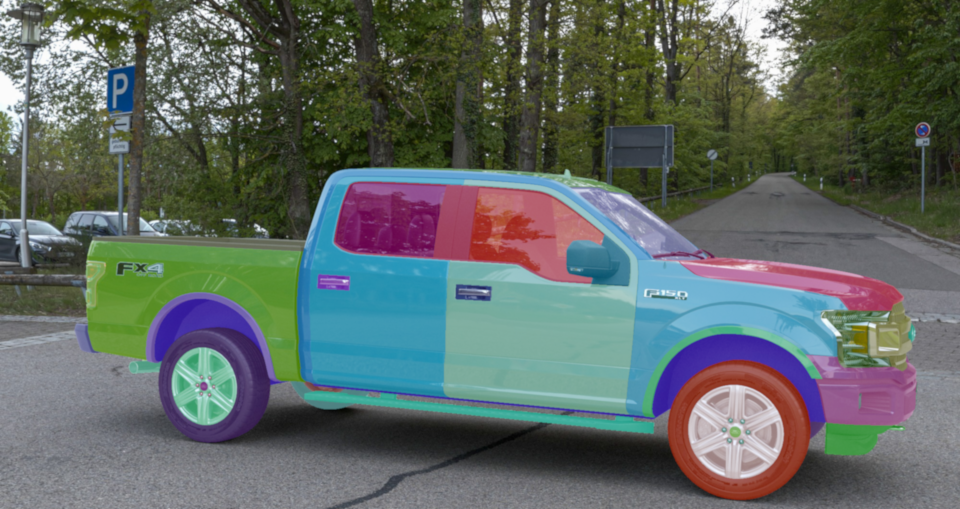

A panel segmentation taxonomy is a standardized framework that defines and categorizes every exterior vehicle panel and sub-panel with precise identifiers. Think of it as a universal language for vehicle parts: instead of one adjuster calling it a "front quarter panel" while another says "front fender," everyone uses the same precise identifier.

The benefits of a panel segmentation taxonomy become clear when claims teams can instantly understand exactly which part sustained damage, regardless of the vehicle's make or model.

A well-structured taxonomy creates uniform names and boundaries for every exterior panel and sub-panel. Industry research demonstrates that standardized labeling improves model reliability and accuracy across automotive applications. Whether examining a 2015 Honda Civic or a 2024 Tesla Model Y, the same panel gets the same identifier.

This standardization enables reliable detection, measurement, and reporting across all vehicle makes and models, including unreleased ones, without manual translation between different naming conventions.
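To make the idea concrete, here is a minimal sketch of how a taxonomy might resolve different everyday names to one canonical identifier. The panel IDs, materials, aliases, and the `resolve_panel` helper are all hypothetical and chosen for illustration; they are not Click-Ins' actual schema.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Panel:
    panel_id: str                    # canonical identifier shared across makes and models
    material: str                    # e.g. "metal" or "glass"
    aliases: Tuple[str, ...] = ()    # everyday names that should map to this panel


# Hypothetical taxonomy entries, for illustration only.
TAXONOMY = (
    Panel("FRONT_FENDER_LEFT", "metal",
          ("left front fender", "left front quarter panel")),
    Panel("FRONT_DOOR_OUTER_LEFT", "metal",
          ("left front door", "driver door skin")),
    Panel("WINDSHIELD", "glass", ("windscreen", "front glass")),
)


def _normalize(name: str) -> str:
    """Lowercase, treat underscores as spaces, and collapse whitespace."""
    return " ".join(name.lower().replace("_", " ").split())


# Index every canonical ID and alias under its normalized form.
ALIAS_INDEX: Dict[str, Panel] = {}
for panel in TAXONOMY:
    for name in (panel.panel_id, *panel.aliases):
        ALIAS_INDEX[_normalize(name)] = panel


def resolve_panel(name: str) -> Optional[Panel]:
    """Return the canonical panel for a free-text name, or None if unknown."""
    return ALIAS_INDEX.get(_normalize(name))
```

With this sketch, `resolve_panel("Left front quarter panel")` and `resolve_panel("left front fender")` return the same `FRONT_FENDER_LEFT` entry, while a name that is not in the taxonomy returns `None` and can be routed to manual review.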

Beyond uniform naming, this standardization enables precise spatial context. When AI-powered inspection algorithms identify a dent or scratch, the taxonomy maps that finding to the correct panel within the known 3D vehicle geometry. Click-Ins' approach combines 3D modeling with photogrammetry to position damage precisely without reconstructing full vehicle models from photos. This exact positioning supports liability decisions, repair scoping, and subrogation by providing a clear location context that adjusters can trust for coverage determinations.

Standardized panel references eliminate confusion during claim handoffs across the entire process. When a first notice of loss (FNOL) identifies damage to "Panel_ID_47_Front_Door_Outer," desk reviewers and repair partners immediately understand the location and scope. Research on automated damage assessment shows that uniform labeling reduces false positives and supports production-ready workflows. This approach reduces rework, accelerates cycle times, and enables seamless integration with existing claims management systems.

Building an effective panel segmentation taxonomy requires balancing technical precision with claims processing efficiency. Design choices such as panel granularity that matches repair decisions and naming conventions aligned with OEM standards directly reduce claim processing time and improve accuracy by eliminating ambiguity in damage assessment.

These panel segmentation taxonomy best practices reduce manual review time and create consistent, defensible claim decisions. Next, we'll explore how hybrid AI systems use this structured approach to extract precise measurements from everyday smartphone photos.

Modern AI-powered vehicle inspections combine neural network detection with a Visual Reasoning Ontology that validates results against geometric constraints and part relationships. This hybrid approach reduces false positives common in pure deep learning systems by checking each detection against known vehicle geometry and panel connections. When the AI identifies potential defects, the ontology layer ensures the finding makes physical sense, preventing errors like detecting dents on glass panels or scratches that span impossible distances across multiple parts.
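The validation step described above can be sketched as a small rule check. This is only an illustration under stated assumptions: the panel names, the material table, the adjacency set, and the `validate` function are hypothetical, and a production ontology would encode far richer geometry and part relationships.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical ontology fragments, for illustration only.
PANEL_MATERIAL = {
    "FRONT_FENDER_LEFT": "metal",
    "FRONT_DOOR_LEFT": "metal",
    "REAR_DOOR_LEFT": "metal",
    "WINDSHIELD": "glass",
}
ADJACENT = {
    frozenset({"FRONT_FENDER_LEFT", "FRONT_DOOR_LEFT"}),
    frozenset({"FRONT_DOOR_LEFT", "REAR_DOOR_LEFT"}),
}
PLAUSIBLE_DAMAGE = {
    "metal": {"dent", "scratch"},
    "glass": {"chip", "crack", "scratch"},
}


@dataclass
class Detection:
    damage_type: str
    panels: Tuple[str, ...]  # panels the detection touches, in order along the damage


def validate(detection: Detection) -> List[str]:
    """Return a list of rule violations; an empty list means the detection is plausible."""
    problems = []
    # Rule 1: the damage type must be physically plausible for the panel material.
    for panel in detection.panels:
        material = PANEL_MATERIAL.get(panel)
        if material is None:
            problems.append(f"unknown panel {panel}")
        elif detection.damage_type not in PLAUSIBLE_DAMAGE[material]:
            problems.append(
                f"{detection.damage_type} is not plausible on {material} panel {panel}"
            )
    # Rule 2: damage spanning several panels must cross neighbouring panels only.
    for a, b in zip(detection.panels, detection.panels[1:]):
        if frozenset({a, b}) not in ADJACENT:
            problems.append(f"{a} and {b} are not adjacent")
    return problems
```

In this sketch, a dent reported on `WINDSHIELD` or a scratch that jumps from `FRONT_FENDER_LEFT` straight to `REAR_DOOR_LEFT` comes back with a violation, so the neural network's output can be rejected or flagged before it reaches an adjuster.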

Building on this validation framework, photogrammetric measurement techniques extract precise anomaly dimensions by positioning detections relative to established vehicle dimensions rather than reconstructing entire models from photos. Geo-referencing algorithms and self-calibration methods determine spatial relationships between issues and prebuilt 3D vehicle geometry, delivering measurements suitable for claims decisions.
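A heavily simplified sketch shows why known vehicle dimensions make measurement possible without full reconstruction. It assumes the damage and a reference feature of known real-world length (taken from the prebuilt 3D geometry) lie on roughly the same plane, facing the camera. Real photogrammetric pipelines also handle perspective, lens distortion, and camera calibration; the function names and numbers here are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # pixel coordinates (x, y)


def mm_per_pixel(ref_a: Point, ref_b: Point, ref_length_mm: float) -> float:
    """Image scale derived from a reference feature whose real length is known."""
    pixel_distance = math.dist(ref_a, ref_b)
    if pixel_distance == 0:
        raise ValueError("reference points must be distinct")
    return ref_length_mm / pixel_distance


def damage_length_mm(start: Point, end: Point, scale: float) -> float:
    """Convert a pixel-space damage extent to millimetres using the image scale."""
    return math.dist(start, end) * scale


# Hypothetical example: a door edge known to be 1000 mm long spans 500 px.
scale = mm_per_pixel((100.0, 200.0), (600.0, 200.0), 1000.0)   # 2.0 mm per pixel
length = damage_length_mm((300.0, 300.0), (330.0, 340.0), scale)
print(f"{length:.1f} mm")  # prints "100.0 mm"
```

The design point is that the scale comes from geometry already known for the vehicle, not from anything reconstructed from the photo, which is what lets an ordinary smartphone image carry measurement information.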

This intelligence-first approach eliminates the need for expensive gantries, laser scanners, or specialized rigs. Standard smartphones provide sufficient image quality for forensic-grade defect assessment when paired with the right algorithms.

Claims leaders frequently ask practical questions about adopting advanced AI inspection technologies. The answers below address accuracy improvements, implementation pathways, and real-world deployment considerations that matter most for claims operations.

Panel segmentation taxonomy provides precise defect mapping that identifies exact affected areas rather than general regions. Research shows segmentation methods deliver essential accuracy for damage assessment. When combined with known 3D vehicle geometry, this approach enables millimeter-scale localization with mean errors under 1.1 mm for precise anomaly positioning.

Standardized panel identification streamlines claim handoffs from FNOL through settlement while reducing manual rework. The taxonomy enables consistent defect reporting across vehicle makes and models using standard smartphone cameras. Advanced AI validates detections against geometric constraints, reducing false positives and supporting audit-ready documentation for insurance workflows.

Implementation starts with defining panel granularity that matches repair decisions and aligning naming conventions with OEM standards. Hybrid AI combining neural networks with ontological validation creates unique digital fingerprints of damage patterns. This approach enables precise matching across images and time periods, helping identify inconsistencies in submitted photos.

Dataset limitations represent the primary obstacle, with most high-quality training data remaining private. Companies also face annotation complexity requiring specialized expertise and significant time investment. Additional hurdles include integrating with existing claims systems and training staff on new workflows while maintaining regulatory compliance.

Most insurers observe measurable improvements within three to six months of deploying panel-based taxonomy systems. The structured approach to damage identification reduces manual review time by enabling automated panel-to-repair mapping. Systematic damage classification combined with precise panel taxonomy typically accelerates settlement cycles and reduces disputes within the first year.

A structured panel segmentation taxonomy tied to known 3D vehicle geometry transforms how insurers handle damage assessment. This approach delivers objective measurements that reduce manual back-and-forth between adjusters and field teams. Recent research shows 3D-enabled systems can cut human review requirements by 35% while improving estimate accuracy by 15%.

To achieve these benefits consistently, Click-Ins combines hybrid AI, prebuilt 3D vehicle geometry, and ontological validation to deliver instant, audit-ready inspections from smartphone photos. Pairing a panel taxonomy with efficient claims workflows helps minimize fraud risk while accelerating the path from FNOL to settlement.

Discover how automated damage detection can streamline your claims process. Request a demo of our Insurance Industry solution today.